
Ever since Generative AI (GenAI) chatbots and content creation tools hit the scene back in 2022, their implications for education have been profound. On the ground, I’ve seen a lot of discussion from teachers and university professors about the nature of assessment and what they might do to either integrate this new technology into their work, or pretend that it doesn’t affect them. For those of us who rely on the written word for assessment (e.g. essays and reports), it became very clear very quickly that GenAI could easily create such works without breaking a sweat. Sure, the outputs feel a bit ‘off’ now and then and contain way too many bullet points and emojis, but for many tasks, it gets the job done.

But what about video assignments and presentations you say? I’m safe!

Written scripts and even slide contents are super easy to create. All a student needs to do is give ChatGPT, Claude, CoPilot or a similar tool your assignment instructions and tell it what to do with them, and they’re all set. With new video tools like OpenAI’s Sora and Google’s Veo, creating videos is also a possibility. So what do we do?

No more policing.

As an educator, I’m not here to tell students not to take shortcuts. As a student myself, when I don’t see the value of a task (whether I have the expertise to understand its value at the time or not), I’ll definitely take a shortcut if it’s available to me. I still agree with the mantra that students wouldn’t cheat if grades didn’t exist, but here we are.

Enter PETRA AI (the Permissive and Transparent use of AI in education). My goal in creating PETRA was not to solve this issue, but to give teachers and students options for bidirectional and transparent use of GenAI in educational settings.

These technologies are being used – fact. For me, I don’t want to hide my head in the sand nor vilify my students who use it, because I use it literally every day. The only other option is to embrace it and share with my students how I think it should be used, and allow them the chance to share how they did use it.

How does it work?

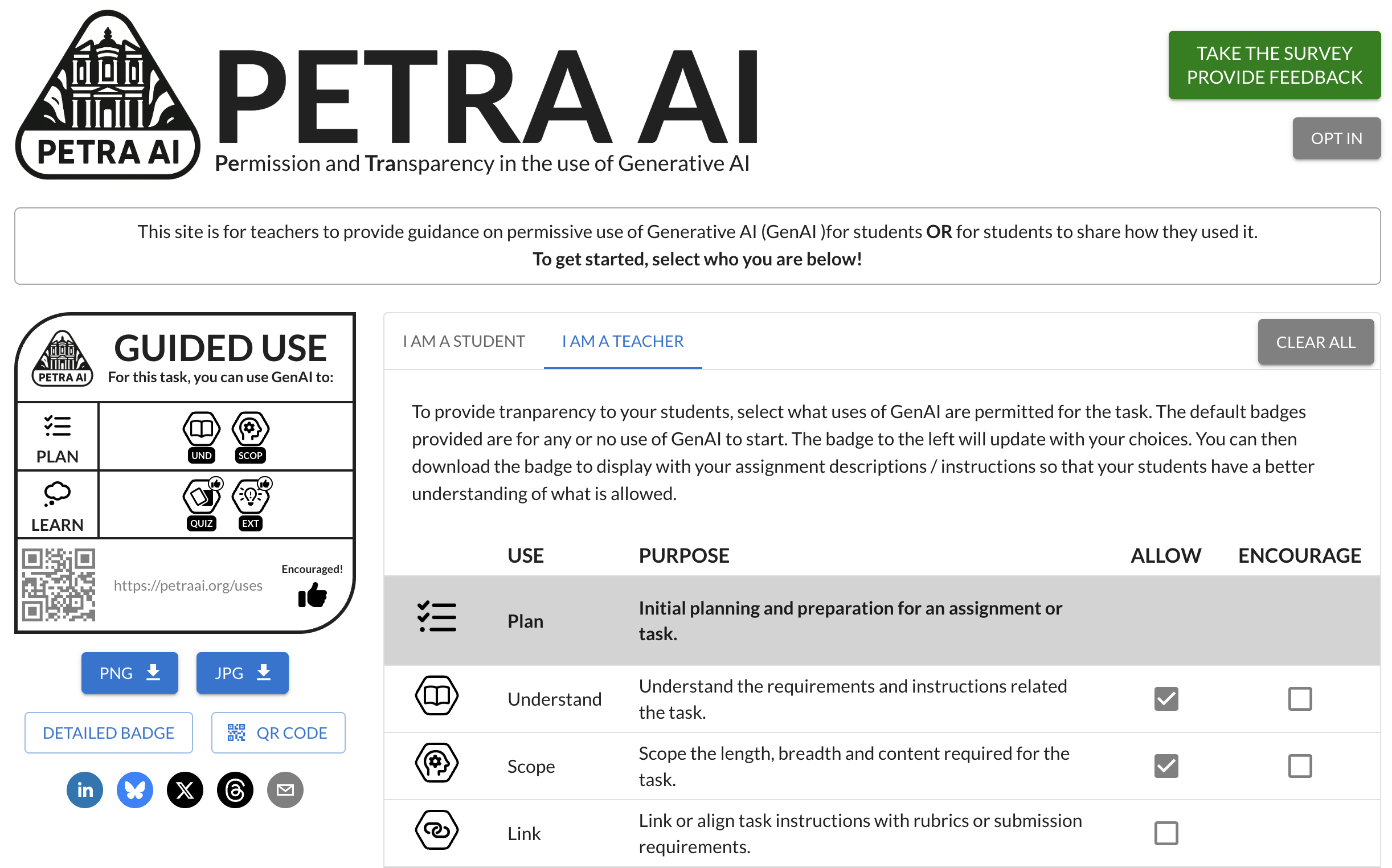

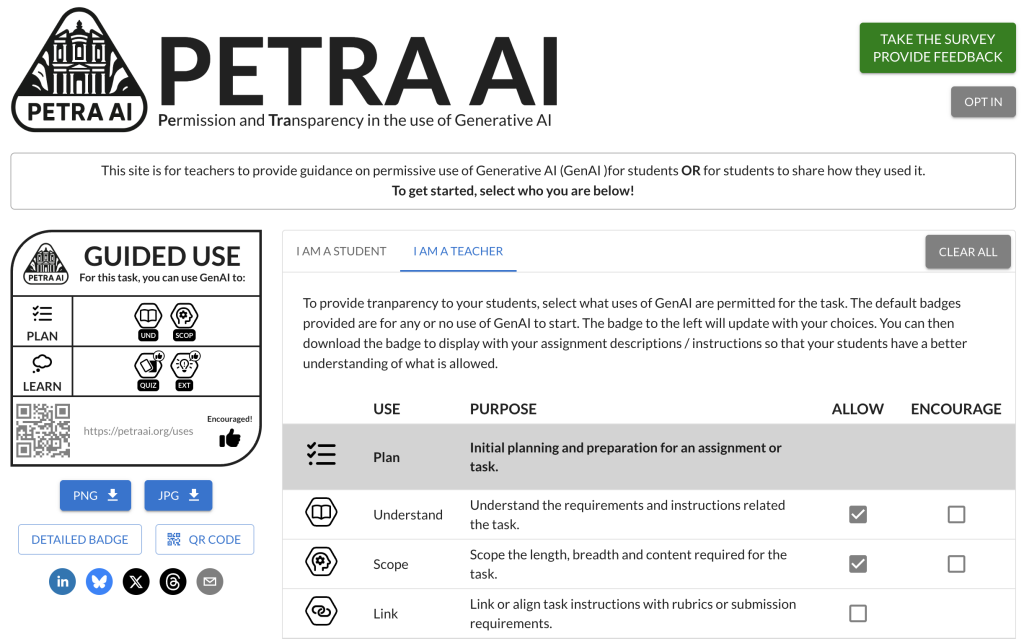

It’s pretty easy – you go to http://www.petraai.org/ and select whether you’re a teacher or a student, then you just click checkboxes to make and download a badge that:

- Tells students what uses of GenAI are appropriate or encouraged for a task (Teacher), OR;

- Tells your teacher how you did use GenAI for a task (Student)

The badges are super quick to create. Then you just download a PNG or JPG and stick it in your assignment. Done.

It can also go both ways – a teacher makes a badge to show students what’s allowed or encouraged, THEN asks students to make their own badge to share how they used it after the task is done. Some might say it’s a declaration, and that is another conversation.

Should students declare?

There is an open question about whether we should require students to declare their use of these tools. I don’t think we should require them to do so, personally. When considering all the fantastic discussions around academic integrity and the need to ensure that a student has actually created what they are submitting, I believe there are two sides to this.

The first is literacy around what academic integrity involves. Of course, don’t cheat – but a student doing research then asking ChatGPT to compile that research into a report or presentation script may not be seen as cheating (and I might not consider it cheating either). Teachers assume that students’ submissions are created by that human student – and this assumption has to go out the window given the advent of these tools. In maths education, it was always “show your work”, and showing your work when creating a written essay or report while using GenAI would be near impossible, so that’s where transparency and modelling come in.

The second aspect is what we do in the face of losing the long-held assumption that what students submit is created by them. Do we automatically assume that all students haven’t written what they submit or post online? But does it even matter? Of course it matters, especially if our aim as educators is to gather evidence that learning has occurred – if submissions are simply generated by chatbots, then our evidence and our insight into our students’ learning is lost. This is where fine-grained permissive use of GenAI and a declaration of how it is used can help – but most importantly the modelling of how we as educators use it.

Liars gonna lie

So obviously there’s a quick way around this – to lie, which humans do all. the. time.

What we can do as educators though, is to admit this reality – and to be transparent with our students in how we use GenAI as well and to trust them in their own use.

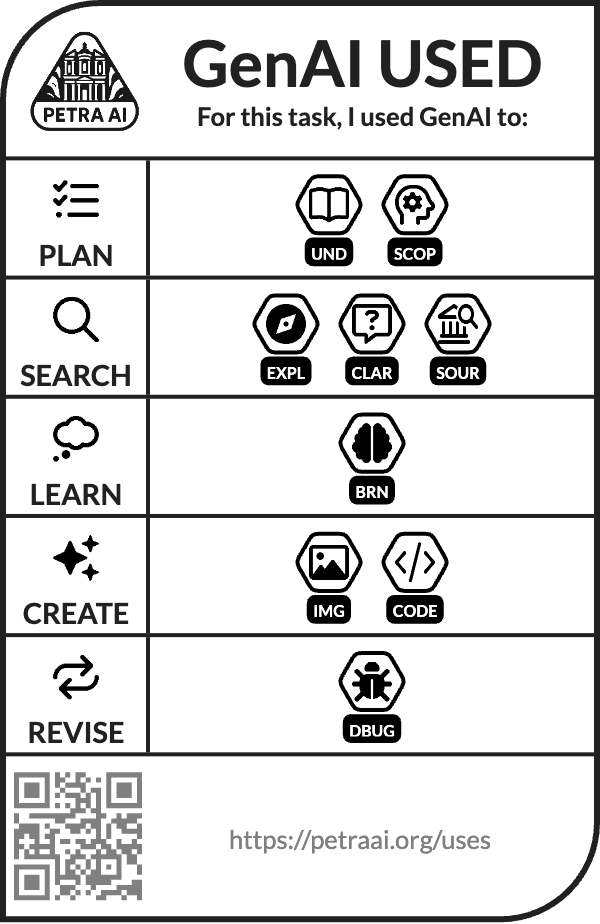

As an example, the badge to the right is my declaration of how I created the PETRA AI website. I used ChatGPT image creation to create the logo – I wrote most of the React code myself, but when I needed to use Material UI components I wasn’t familiar with, or when I needed to chain state data across the app, I asked Claude, ChatGPT and Copilot to generate that for me. Did I cheat? I don’t think so. I just used the tools available to me to scaffold and support the work I was already doing to manifest ideas in my brain.

So my transparency around my own use has to model the ethical ways in which GenAI can be used – a practice that has the potential to filter through to our students.

Why no colours?

I’ve seen a few other frameworks based on stop lights – however, a stratified ‘red light’ / ‘green light’ approach did not work for me, just like it doesn’t work in identifying at-risk students through learning analytics, because the world is more nuanced than coloured boxes. The other issue is that colours have meaning. If we say that we don’t allow GenAI use at all, and label that red, our monkey brains see blood and will see the use of GenAI as a bad thing. On the other hand, our monkey brains see green as life and will inherently compare the two colours, so I decided to leave colour out of it.

Another issue is I’m colourblind 😉

Why do we need this?

GenAI is here to stay whether we like it or not, and I’d rather be on the right side of history and permit and encourage its use (whether limited or unlimited) than ban it altogether, because we all know what banning something does.

So this is the idea I came up with – a dynamic, badge-based system to increase transparency around the use of GenAI, and maybe even the literacy around its affordances. The framework I propose is not exhaustive – hell, there are probably thousands of ways GenAI can be used in education that I didn’t think of, but this is a step in the right direction towards both understanding how our students are using it, and building our own literacies and shared understandings of technology use.
